
Objective

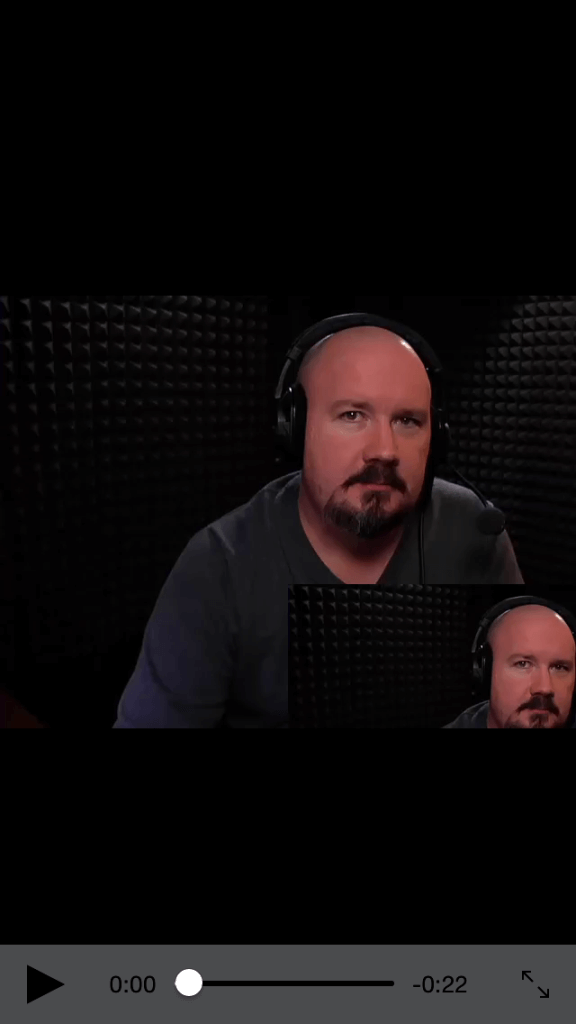

The main objective of this post is to describe how to create a single video by overlapping multiple videos side by side.

Here I demonstrate how two videos can be overlapped to create a single video using the built-in AVFoundation framework.

Let's take a look at the basic idea behind this task.

Step 1 Create Xcode Project

First, create an Xcode project named VideoOverlapDemo and save it. This project will contain one ViewController.

Step 2 Add Library to Project

Overlapping videos requires the AVFoundation framework, so link it to your project. You also need a video file, either in your project's main bundle or somewhere else your app can read, e.g. the Documents directory or the temporary directory.
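To make the file locations mentioned above concrete, here is a minimal sketch of how a video's URL might be resolved. The helper name `VideoURLNamed` is hypothetical (it is not part of AVFoundation), and the search order of bundle, Documents, then temporary directory is only an assumption for illustration.

```objc
#import <Foundation/Foundation.h>
#import <AVFoundation/AVFoundation.h>

// Hypothetical helper (not an AVFoundation API): looks for a video file in the
// main bundle first, then in Documents, then in the temporary directory.
// Returns nil when the file is not found in any of them.
static NSURL *VideoURLNamed(NSString *name, NSString *extension) {
    NSURL *bundleURL = [[NSBundle mainBundle] URLForResource:name withExtension:extension];
    if (bundleURL != nil) {
        return bundleURL;
    }

    // Collect the fallback directories, skipping Documents if it cannot be resolved.
    NSMutableArray<NSString *> *directories = [NSMutableArray array];
    NSString *documents = [NSSearchPathForDirectoriesInDomains(NSDocumentDirectory, NSUserDomainMask, YES) firstObject];
    if (documents != nil) {
        [directories addObject:documents];
    }
    [directories addObject:NSTemporaryDirectory()];

    NSString *fileName = [name stringByAppendingPathExtension:extension];
    for (NSString *directory in directories) {
        NSString *path = [directory stringByAppendingPathComponent:fileName];
        if ([[NSFileManager defaultManager] fileExistsAtPath:path]) {
            return [NSURL fileURLWithPath:path];
        }
    }
    return nil;
}
```

A caller could then build the asset only when a URL was found, for example `NSURL *url = VideoURLNamed(@"sampleVideo", @"mp4");` followed by `[AVURLAsset URLAssetWithURL:url options:nil]` if `url` is not nil.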

Step 3 Overlay code in viewDidLoad method

Now call the following method from viewDidLoad.

    - (void)overlapVideos {
        // First, load your videos using AVURLAsset. Make sure you give the correct paths.
        // This demo loads the same bundled file for both assets.
        AVURLAsset *firstAsset = [AVURLAsset URLAssetWithURL:[NSURL fileURLWithPath:[[NSBundle mainBundle] pathForResource:@"sampleVideo" ofType:@"mp4"]] options:nil];
        AVURLAsset *secondAsset = [AVURLAsset URLAssetWithURL:[NSURL fileURLWithPath:[[NSBundle mainBundle] pathForResource:@"sampleVideo" ofType:@"mp4"]] options:nil];

        // Create the AVMutableComposition object that holds our
        // AVMutableCompositionTrack objects, one per video.
        AVMutableComposition *mixComposition = [[AVMutableComposition alloc] init];

        // Create the first AVMutableCompositionTrack and add it to the composition.
        AVMutableCompositionTrack *firstTrack = [mixComposition addMutableTrackWithMediaType:AVMediaTypeVideo preferredTrackID:kCMPersistentTrackID_Invalid];

        // Insert the full length of firstAsset into the new track at kCMTimeZero,
        // so the video plays from the start of the track.
        [firstTrack insertTimeRange:CMTimeRangeMake(kCMTimeZero, firstAsset.duration) ofTrack:[[firstAsset tracksWithMediaType:AVMediaTypeVideo] objectAtIndex:0] atTime:kCMTimeZero error:nil];

        // Repeat the same process for the second track.
        AVMutableCompositionTrack *secondTrack = [mixComposition addMutableTrackWithMediaType:AVMediaTypeVideo preferredTrackID:kCMPersistentTrackID_Invalid];
        [secondTrack insertTimeRange:CMTimeRangeMake(kCMTimeZero, secondAsset.duration) ofTrack:[[secondAsset tracksWithMediaType:AVMediaTypeVideo] objectAtIndex:0] atTime:kCMTimeZero error:nil];

        // Create the AVMutableVideoCompositionInstruction. It holds the array of
        // AVMutableVideoCompositionLayerInstruction objects. Its time range should
        // be as long as the longer of the two assets.
        AVMutableVideoCompositionInstruction *mainInstruction = [AVMutableVideoCompositionInstruction videoCompositionInstruction];
        mainInstruction.timeRange = CMTimeRangeMake(kCMTimeZero, CMTimeMaximum(firstAsset.duration, secondAsset.duration));

        // We need two AVMutableVideoCompositionLayerInstruction objects, one per track.
        // This one is for the first track. An affine transform scales and moves the
        // first track so that it is displayed smaller, at the bottom of the screen.
        // (The first track is the one that remains on top.)
        // Note: adjust the scale and translation to match your own video size.
        AVMutableVideoCompositionLayerInstruction *firstLayerInstruction = [AVMutableVideoCompositionLayerInstruction videoCompositionLayerInstructionWithAssetTrack:firstTrack];
        CGAffineTransform scale = CGAffineTransformMakeScale(0.6f, 0.6f);
        CGAffineTransform move = CGAffineTransformMakeTranslation(320, 320);
        [firstLayerInstruction setTransform:CGAffineTransformConcat(scale, move) atTime:kCMTimeZero];

        // Create the layer instruction for the second track, again using an affine
        // transform to scale and move it.
        AVMutableVideoCompositionLayerInstruction *secondLayerInstruction = [AVMutableVideoCompositionLayerInstruction videoCompositionLayerInstructionWithAssetTrack:secondTrack];
        CGAffineTransform secondScale = CGAffineTransformMakeScale(0.9f, 0.9f);
        CGAffineTransform secondMove = CGAffineTransformMakeTranslation(0, 0);
        [secondLayerInstruction setTransform:CGAffineTransformConcat(secondScale, secondMove) atTime:kCMTimeZero];

        // Add both layer instructions to the main instruction as an array.
        mainInstruction.layerInstructions = @[firstLayerInstruction, secondLayerInstruction];

        // Create the AVMutableVideoComposition. It can hold several
        // AVMutableVideoCompositionInstruction objects (for effects such as fades and
        // transitions), as long as their time ranges do not overlap. This example
        // uses only one.
        AVMutableVideoComposition *mainComposition = [AVMutableVideoComposition videoComposition];
        mainComposition.instructions = @[mainInstruction];
        mainComposition.frameDuration = CMTimeMake(1, 30);
        mainComposition.renderSize = CGSizeMake(640, 480);

        // Build the output path in the Documents directory and remove any file
        // left over from a previous export.
        NSArray *paths = NSSearchPathForDirectoriesInDomains(NSDocumentDirectory, NSUserDomainMask, YES);
        NSString *documentsDirectory = [paths objectAtIndex:0];
        NSString *myPathDocs = [documentsDirectory stringByAppendingPathComponent:@"overlapVideo.mov"];
        if ([[NSFileManager defaultManager] fileExistsAtPath:myPathDocs]) {
            [[NSFileManager defaultManager] removeItemAtPath:myPathDocs error:nil];
        }
        NSURL *url = [NSURL fileURLWithPath:myPathDocs];

        // Export the composition asynchronously and report back on the main queue.
        AVAssetExportSession *exporter = [[AVAssetExportSession alloc] initWithAsset:mixComposition presetName:AVAssetExportPresetHighestQuality];
        exporter.outputURL = url;
        exporter.videoComposition = mainComposition;
        exporter.outputFileType = AVFileTypeQuickTimeMovie;
        [exporter exportAsynchronouslyWithCompletionHandler:^{
            dispatch_async(dispatch_get_main_queue(), ^{
                [self exportDidFinish:exporter];
            });
        }];
    }
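The scale and translation values in the method above are hard-coded for one particular video size. As a rough sketch of how they might be derived for a true side-by-side layout, the hypothetical helper below (`SideBySideTransform` is not an AVFoundation or Core Graphics API) fits a video into the left or right half of the render area. It assumes the source track needs no rotation, so a track with a non-identity preferred transform would need extra handling.

```objc
#import <Foundation/Foundation.h>
#import <CoreGraphics/CoreGraphics.h>

// Hypothetical helper: returns a transform that scales a video of `videoSize`
// to fit inside one half of `renderSize` (aspect ratio preserved) and moves it
// into the left (column 0) or right (column 1) half, vertically centered.
// Assumption: the source track needs no rotation.
static CGAffineTransform SideBySideTransform(CGSize videoSize, CGSize renderSize, int column) {
    CGFloat slotWidth = renderSize.width / 2.0;
    CGFloat scale = MIN(slotWidth / videoSize.width, renderSize.height / videoSize.height);

    CGFloat scaledWidth = videoSize.width * scale;
    CGFloat scaledHeight = videoSize.height * scale;

    // Center the scaled video inside its slot.
    CGFloat x = column * slotWidth + (slotWidth - scaledWidth) / 2.0;
    CGFloat y = (renderSize.height - scaledHeight) / 2.0;

    // Scale first, then translate.
    return CGAffineTransformConcat(CGAffineTransformMakeScale(scale, scale),
                                   CGAffineTransformMakeTranslation(x, y));
}
```

The result could be passed to `setTransform:atTime:` on each layer instruction, using the track's `naturalSize` as the video size and the composition's `renderSize` as the render size.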

Step 4 Perform task on Overlapped Video

You can implement the following method if you want to perform any task on the overlapped video.

    - (void)exportDidFinish:(AVAssetExportSession *)session {
        NSURL *outputURL = session.outputURL;
        // Here you have the output URL of the final overlapped video.
        // Add your desired task here.
    }
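The completion handler runs whether or not the export succeeded, so it is usually worth checking the session's status before using the output file. The following is a minimal sketch; `CompletedExportURL` is a hypothetical helper name, while the status constants and the `error` property belong to AVAssetExportSession.

```objc
#import <Foundation/Foundation.h>
#import <AVFoundation/AVFoundation.h>

// Hypothetical helper: returns the output URL only when the export completed,
// and logs the reason otherwise.
static NSURL *CompletedExportURL(AVAssetExportSession *session) {
    switch (session.status) {
        case AVAssetExportSessionStatusCompleted:
            return session.outputURL;
        case AVAssetExportSessionStatusFailed:
            NSLog(@"Export failed: %@", session.error);
            return nil;
        case AVAssetExportSessionStatusCancelled:
            NSLog(@"Export was cancelled");
            return nil;
        default:
            NSLog(@"Export has not finished (status %ld)", (long)session.status);
            return nil;
    }
}
```

Inside `exportDidFinish:`, the returned URL could be used only when it is not nil, for example to play or share the overlapped video.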

If you have any questions about how to overlap multiple videos in iOS, please leave a comment below.


